There is a version of the peanut allergy story that goes like this: something mysterious happened to American children starting in the 1990s. Peanut allergies exploded. Nobody knows why. Probably some combination of hygiene, genetics, and modern living. The epidemic is a puzzle.

That version is wrong. Or at least, it’s missing the most important part. The peanut allergy epidemic in the United States was substantially caused by the medical establishment’s own advice. Doctors told parents to keep peanuts away from babies. Parents listened. Allergies tripled. Then doctors reversed themselves, told parents to do the opposite, and allergies dropped 43%. This is not a mystery. It is a case study in how expert consensus, built on no evidence, can create the exact problem it claims to prevent.

the timeline nobody talks about

Before the 1990s, peanut allergies were barely a thing. They existed, but they were rare enough that medical literature and media almost never mentioned them before 1980. Parents in the 1960s and 1970s fed their babies peanut butter whenever they started solid foods, often at a few months old. Nobody thought twice about it. Food allergy prevalence was very low.

Then the sequence of events that created the epidemic began. In 1998, the UK issued guidance that high-risk infants should avoid peanuts. In 2000, the American Academy of Pediatrics followed with a policy statement recommending that high-risk infants avoid peanuts until age three, eggs until age two, and dairy until age one.

Here is the critical detail. There was no study supporting this recommendation. None. It was based on physician guesswork. As Dr. Jonathan Spergel, head of allergy at Children’s Hospital of Philadelphia, later put it:

“Back then, we gave people really bad advice. We told people to avoid food allergens. But there was never any evidence for this. It was just based on a few physicians’ best guess. There’s never been any study that ever proved that food avoidance worked.”

The recommendation was narrow, aimed at high-risk infants, but it spread far beyond its intended scope. Pediatricians handed out pamphlets telling all parents to delay peanut introduction. Schools banned peanuts. Airlines stopped serving them. The cultural message was clear and terrifying: peanuts were dangerous, keep them away from children.

Between 1997 and 2008, the rate of peanut allergy in American children more than tripled, from 0.4% to 1.4%. By 2008, an estimated 1.8 million kids in the U.S. had a peanut allergy. That is more than the entire population of Philadelphia.

the israeli clue

While American and British children were developing peanut allergies at accelerating rates, Israeli children were largely spared. The peanut allergy rate in Israel was roughly one-tenth the rate in the UK.

The reason was hiding in plain sight. Israeli babies eat a peanut snack called Bamba starting at around four months old. About 90% of Israeli families buy it regularly. It is essentially a cheese puff made with peanut butter instead of cheese, and it dissolves in the mouth, making it safe for infants. Israeli parents were not doing anything sophisticated. They were just feeding their kids a cheap corn puff. And their kids were not developing peanut allergies.

This was the observation that launched the most important allergy study in decades. Dr. Gideon Lack at King’s College London noticed the discrepancy between Israeli and British children with similar genetic backgrounds. He hypothesized that early peanut exposure was protective, not dangerous. The opposite of what every Western medical authority was telling parents.

the study that reversed everything

The LEAP study, published in the New England Journal of Medicine in 2015, enrolled 640 high-risk infants between 4 and 11 months old. Half were fed peanut products regularly. Half avoided peanuts entirely. At age five, they were all tested.

The results were not subtle.

Of the children who avoided peanuts, 17% developed a peanut allergy. Of the children who ate peanuts regularly, only 3% did. That is a relative reduction in allergy risk of roughly 80%, achieved by doing the opposite of what doctors had been telling parents for fifteen years.

For infants who already showed mild sensitivity to peanuts, the results were even more dramatic. In the avoidance group, 35.3% became allergic. In the consumption group, 10.6%.

These were kids who were already trending toward allergy, and simply eating the thing they were supposedly allergic to cut their risk by 70%.
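The percentages above are relative risk reductions, and the arithmetic is easy to check. Here is a minimal sketch in Python, using the rounded rates quoted in this article rather than the study's raw counts. The function name and the dictionary labels are made up for illustration.

```python
def relative_risk_reduction(control_rate: float, treated_rate: float) -> float:
    """Fraction by which risk falls in the treated group relative to control."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (control_rate - treated_rate) / control_rate


# Rates as quoted in the text (rounded): (avoidance group, consumption group).
comparisons = {
    "all enrolled infants": (0.17, 0.03),
    "infants with mild sensitivity": (0.353, 0.106),
}

for label, (avoided, consumed) in comparisons.items():
    rrr = relative_risk_reduction(avoided, consumed)
    absolute_drop = (avoided - consumed) * 100  # in percentage points
    print(f"{label}: {rrr:.0%} relative reduction, {absolute_drop:.1f} points absolute")
```

With these rounded inputs the first comparison comes out near 82%, which is the "80%" figure in round numbers, and the second comes out at about 70%. The absolute gaps, 14 and roughly 25 percentage points, are the other way to read the same result.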

Dr. Lack’s conclusion was blunt:

“For decades allergists have been recommending that young infants avoid consuming allergenic foods such as peanut to prevent food allergies. Our findings suggest that this advice was incorrect and may have contributed to the rise in the peanut and other food allergies.”

the reversal and the proof

By 2008, well before LEAP was published, the AAP had already quietly rescinded its 2000 guidance, acknowledging that delaying peanut introduction did not protect against allergies.

After the LEAP study, new guidelines came out in 2015 and 2017 recommending early peanut introduction for all risk levels. By 2021, major medical organizations were telling parents to introduce peanut, egg, and other allergens at four to six months.

The reversal worked.

A 2025 study from Children’s Hospital of Philadelphia, analyzing over 120,000 children, found that peanut allergy rates dropped 27% after the 2015 guidelines and 43% after the 2017 addendum. Overall food allergy diagnoses fell by nearly 40%. Peanut dropped from the most common food allergen in young children to the second most common, surpassed by egg.

The speed of the decline roughly mirrors the speed of the increase. It took about a decade for avoidance advice to triple allergy rates. It is taking about a decade for the reversal to cut them nearly in half.

The symmetry is hard to explain unless the advice itself was a major driver of the epidemic.

the uncomfortable pattern

What makes the peanut allergy story uncomfortable is not that doctors were wrong. Doctors are wrong all the time. Medicine advances by being wrong and correcting itself.

What makes it uncomfortable is the specific structure of the error.

The AAP issued a recommendation based on no evidence. The recommendation, once absorbed by a terrified parent culture, expanded far beyond its narrow intended audience. The expanded version created a massive increase in the exact condition it was trying to prevent.

And the correction, when it finally came, required not just new evidence but a complete philosophical reversal: stop avoiding the allergen and start feeding it to babies.

That structure, a precautionary recommendation with no evidence base that creates the problem it predicts, shows up in other places. But it is unusually clean in the peanut case because the natural experiment exists.

Israel never followed the avoidance advice. Israeli babies ate Bamba. Israeli children rarely developed peanut allergies.

The control group was always there, eating peanut corn puffs on a kibbutz, and nobody in American or British medicine paid attention until the gap became too large to ignore.

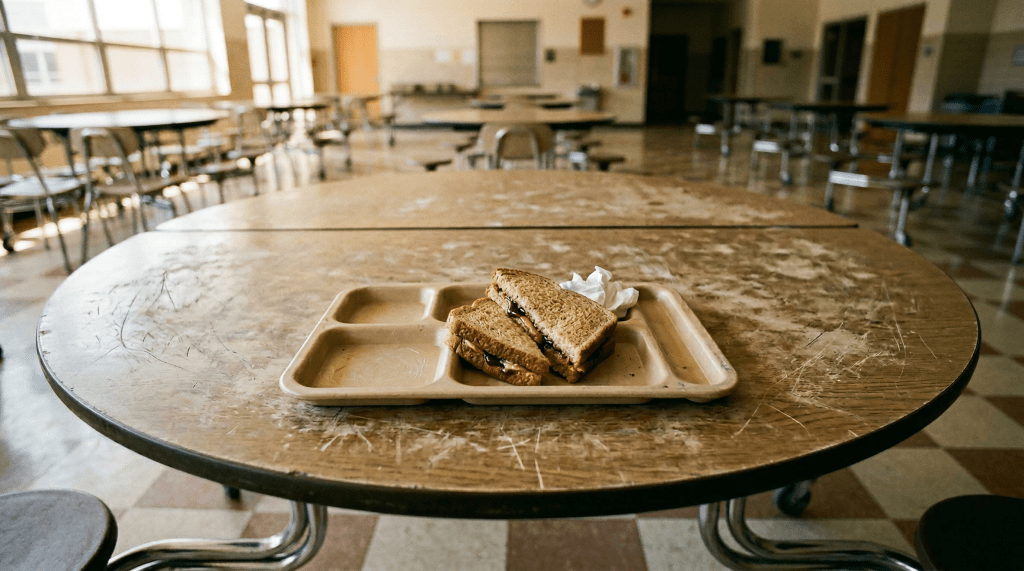

There is also the question of what to do with the social infrastructure that grew around the epidemic. Peanut-free school zones. Airline bans. EpiPen prescriptions. The entire apparatus of peanut fear.

As Princeton researcher Miranda Waggoner documented, the societal response to peanut allergies grew far out of proportion to the actual risk. As one physician noted, roughly the same number of people die from peanut allergies each year as from lightning strikes.

A food-allergic person in the U.S. is statistically more likely to be murdered than to die from an allergic reaction.

None of which diminishes the real terror of anaphylaxis for the families who deal with it. But it does suggest that the policy response was shaped more by fear than by probability.

what is still unknown

The avoidance-to-introduction reversal does not explain everything.

Peanut allergies were already rising slightly before the 2000 AAP guidelines, which means something else was contributing.

The hygiene hypothesis, the idea that modern cleanliness leaves immune systems with too little to fight, so they turn on harmless proteins instead, has real evidence behind it.

Vitamin D deficiency, which has roughly doubled in the U.S. in recent decades, may play a role.

There is evidence that sensitization can occur through the skin, meaning that babies exposed to peanut proteins on surfaces but not in food may develop allergies through the wrong pathway.

But the avoidance advice was clearly the accelerant.

The epidemic’s timing matches the advice almost perfectly.

The reversal’s timing matches the correction almost perfectly.

And the countries that never followed the advice, Israel most prominently, never had the epidemic.

The question worth asking is not just about peanuts.

It is about the general pattern.

How many other conditions are we managing with precautionary advice that has no evidence base? How would we know if the advice itself was creating the problem?

The peanut case was detectable because a natural control group existed and because the effect was large and fast.

But if the effect were smaller, or slower, or if no country happened to do the opposite, we might still be telling parents to avoid peanuts.

We might still be watching the epidemic grow.

And we might still be calling it a mystery.